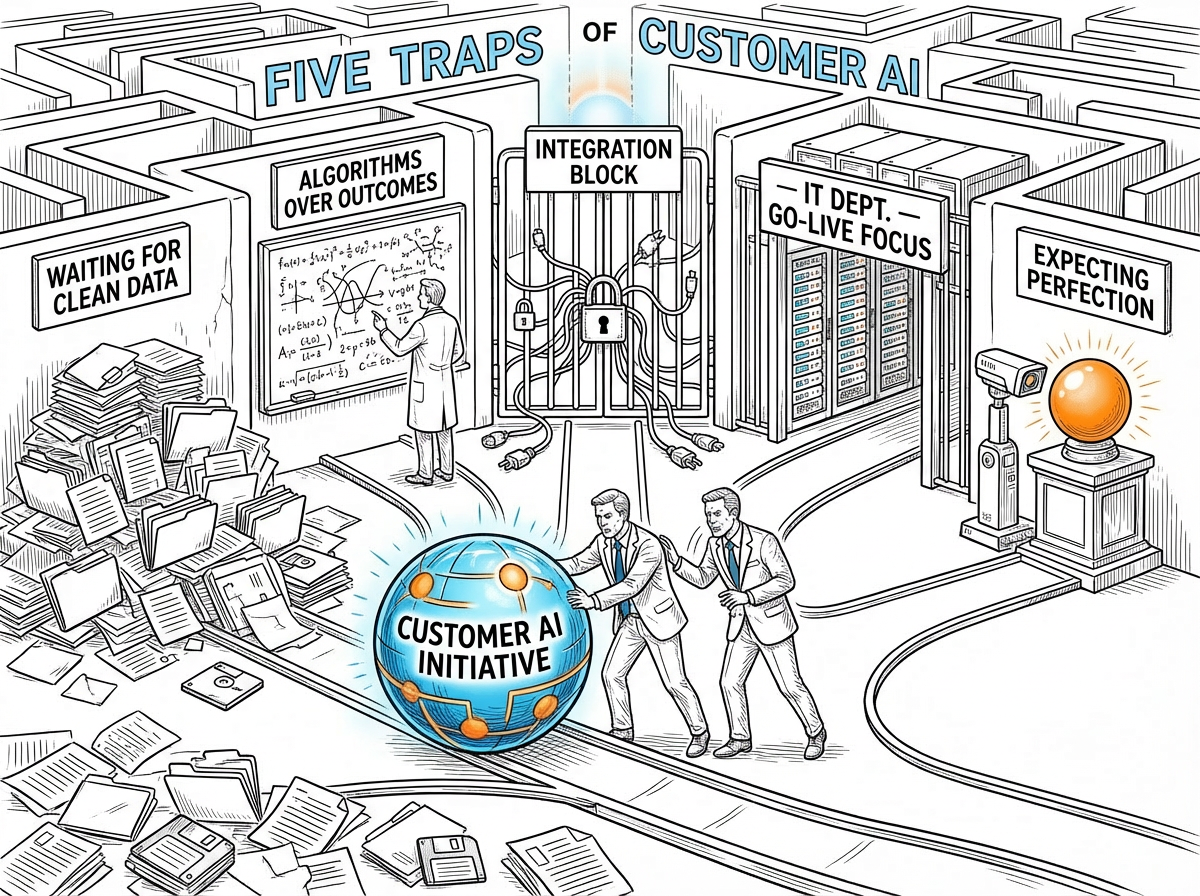

Five Implementation Traps We See Again and Again

By Richard Owen & Maurice FitzGerald

Field Notes on Customer AI · Edition 001 · April 28, 2026

Each Tuesday, Field Notes surfaces what we're seeing in the field: patterns from implementations, ideas worth stress-testing, and the occasional inconvenient truth about how Customer AI programs succeed or stall. No abstractions. No product pitches. Just the working knowledge that tends to matter.

This edition covers something we encounter often enough that it deserves a direct treatment: the five ways Customer AI programs get derailed before they ever generate value. Not theory: these are the recurring traps we watch companies walk into.

The Field Read

The pattern that keeps repeating - Richard Owen

Most Customer AI programs do not fail because the idea was wrong. They fail because of how the idea gets introduced into the organization.

The concept is sound. The economics are strong enough that you could think of Customer AI as one of the better-substantiated investments a CX leader can make right now. Yet programs stall, underperform, or get quietly shelved before producing a single actionable prediction. When you look at what actually went wrong, five patterns show up with uncomfortable consistency.

The first is waiting for clean data. No enterprise has clean data, and messy data is precisely what Customer AI is designed to handle. Waiting for the warehouse to be ready is not caution; it is an opportunity cost that compounds in every account that churns while the project is still being scoped.

The second is letting data science set the agenda rather than business leaders. The conversation then orients around models and algorithms instead of the question that actually matters: which accounts are at risk, and what should we do about them? Domain expertise outweighs algorithmic sophistication every time.

The third is allowing integration to block the start. Integration is the right destination; it is not the right starting point. The strongest programs run their first year without it.

The fourth is housing the initiative under IT. When IT owns it, success gets defined as go-live rather than revenue outcome. Business leaders disengage, adoption stalls, and the program becomes an infrastructure project that nobody in finance is tracking.

The fifth is expecting the model to be right on day one. Customer AI reaches high accuracy through iteration, not through waiting. Programs achieving 88%+ accuracy got there by running, learning, and refining.

The thread connecting all five: treating Customer AI as something that needs to be finished before it can be useful. It doesn't. It needs to be started.

The Practitioner's Take

What this looks like inside a large enterprise - Maurice FitzGerald

Richard's five traps are real. Let me tell you what the fourth one looks like from inside a large company.

At HP Software, I watched a promising customer analytics initiative get routed through the enterprise architecture review board. Good governance on paper. In practice, it meant the project joined a queue, acquired an IT sponsor, received a go-live date eighteen months out, and lost the attention of every business leader who had championed it. By the time the system was ready, the business case was stale and the executive sponsor had moved to a different role.

The pattern is common. In any large organization, IT governance is designed to manage risk. It is not designed to move fast on something whose value comes from iteration. Customer AI produces its first useful predictions in weeks, not years. Routing it through an annual planning cycle is like putting a speedboat into dry dock before anyone has checked whether it floats.

So: if your Customer AI initiative sits under IT or data science, identify the business leader who should own the outcome. Not the technology. The revenue result. Run the first ninety days as a business-funded pilot and let the early predictions do the persuading.

Richard has also written a compelling article, 'The Tyranny of the Org Chart', on the challenges of implementing Customer AI across fragmented business silos. This is the 'integration' challenge he refers to above.

The Field Tactic

Three moves this week

Three things to do this week if Richard's five traps feel familiar.

1. Run a trap audit. Take each of the five traps and score your initiative honestly. Are you waiting on data, waiting on integration, or waiting for perfect predictions before showing results to leadership? One honest hour on this exercise saves months of stall.

2. Reframe ownership. If your Customer AI program currently sits under IT or data science, identify the business leader who should own the revenue outcome, not the technology. Then have the conversation about what that shift requires. It is usually shorter than people expect.

3. Set a 90-day value milestone in writing. Agree with your executive sponsor on one specific, measurable result in ninety days: a prediction on a defined account set, a churn-risk ranking, an expansion opportunity surfaced. Getting it on paper moves the program from concept to commitment.

The Data Point

The number nobody talks about

90%

That is the failure rate of homegrown AI initiatives, a figure documented consistently across enterprise technology cycles from CRM to ERP to Customer AI. Research from Cornell and the University of Toronto identifies a recurring cause: internal data science teams frame these as technical problems rather than business transformation challenges, and lack the domain expertise to know which signals actually predict customer outcomes.

The companies that recover fastest from that failed investment stop building from scratch and deploy purpose-built solutions with embedded domain knowledge.

Source: Cornell University / University of Toronto enterprise AI implementation research

The Iconoclast Question

This week's provocation

If your Customer AI program stalled tomorrow, would anyone in your finance team notice? Or is the business case still living only in the minds of the people who built it? Reply with what it would take to make the program visible at that level.

The Field Bridge

The Customer AI Masterclass covers what this issue describes: the implementation traps and how to avoid them. Unit 7 walks through what works.

[ Get Instant Access → Click here]

Coming in future editions

- The Sound of Silence.

- The Cost of Looking Backwards.

- The Tyranny of the Org Chart.

- Iceberg Dead Ahead? Customer Portfolio Management is Not a Game.

- Prevention Economics.

Field Notes publishes every Tuesday. Each edition focuses on one topic — a trap, a framework, a field observation, or a pattern worth examining. If something in here resonates, or if you're seeing something different in your own programs, we'd like to hear about it.